Fake Reviews: How Common They Are, How to Spot Them, and What the Law Says Now

Last updated: April 21, 2026

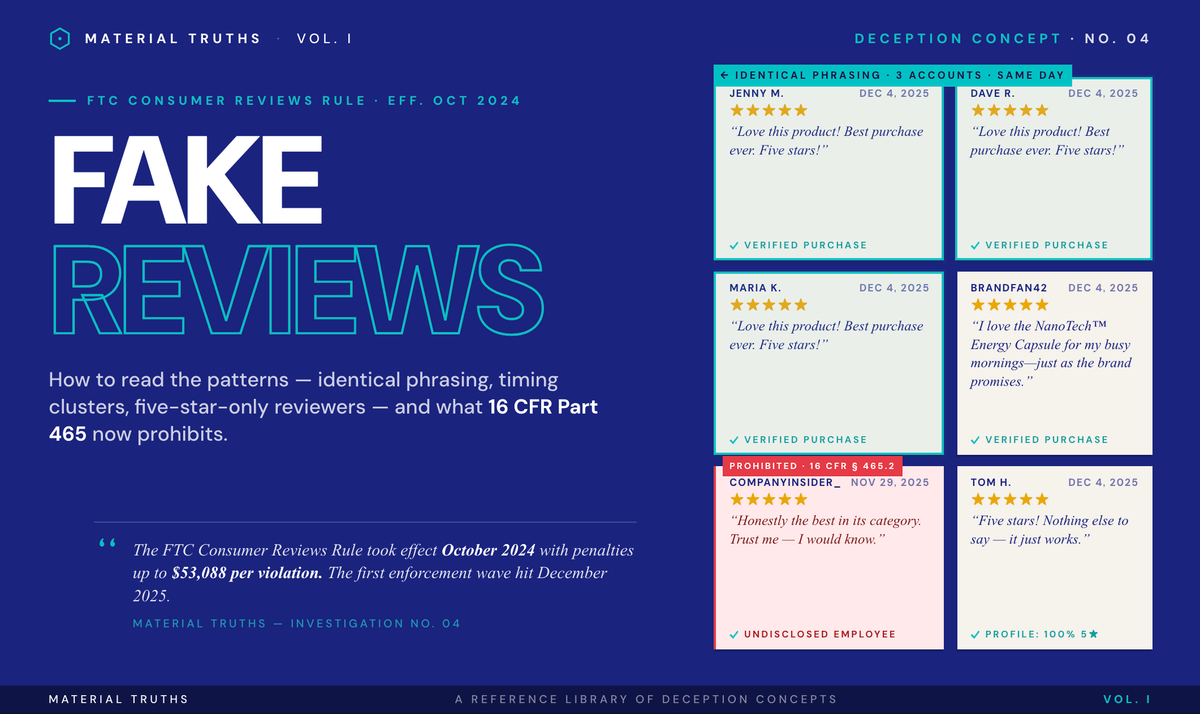

Fake reviews are fabricated or manipulated consumer testimonials designed to mislead buyers about a product's quality, popularity, or legitimacy. They include AI-generated reviews, paid reviews from non-customers, insider reviews without disclosure, and the suppression of negative reviews. The FTC's Consumer Reviews and Testimonials Rule, effective October 21, 2024, explicitly prohibits most forms of review manipulation and lets the FTC seek civil penalties of up to $53,088 per violation.

What are fake reviews and how prevalent are they?

Fake reviews span a spectrum from obvious fraud to subtle manipulation. At one end: five-star reviews from accounts that never bought the product, generated in bulk by paid reviewers or AI. At the other end: real consumers incentivized to write positive reviews, employees reviewing their own products, or brands suppressing negative reviews through legal threats. The FTC treats all of these as actionable.

Prevalence estimates vary by methodology. Independent analyses of Amazon bestsellers have found that roughly 43 percent of top-selling products contain reviews flagged for inauthenticity by algorithmic detection. Broader studies of e-commerce review authenticity suggest that 30 to 40 percent of reviews on major platforms show at least one indicator of manipulation. Platform-reported removal figures point to a similar scale: Amazon reports removing hundreds of millions of suspected fake reviews annually, and Google, Yelp, and TripAdvisor each publish their own removal figures.

The economics explain the prevalence. Review manipulation is cheap — a verified review from a review farm costs between $5 and $25. The return on investment for brands is substantial. Products with strong review profiles sell significantly more than products without them. On platforms like TikTok Shop and Amazon, reviews function as the primary quality signal for consumers who cannot physically inspect products before purchase.

How does the FTC's fake review rule work?

The Consumer Reviews and Testimonials Rule, codified at 16 CFR Part 465, went into effect on October 21, 2024. The rule prohibits six categories of conduct:

- Fake consumer reviews and testimonials. Creating, selling, or buying reviews from people who do not exist, have no experience with the product, or misrepresent their experience. This explicitly includes AI-generated reviews presented as authentic.

- Incentives conditioned on sentiment. Offering compensation or other benefits contingent on a particular sentiment. For example, offering a gift card only in exchange for five-star reviews violates the rule, regardless of whether the brand discloses the incentive.

- Undisclosed insider reviews. Reviews by officers, managers, employees, or their immediate family without clear disclosure of the relationship.

- Company-controlled review websites. Creating sites that falsely present themselves as independent review platforms when they are owned or controlled by the brand.

- Review suppression. Using unfounded legal threats, intimidation, or false public accusations to suppress negative reviews. This does not prevent legitimate defamation actions, but groundless threats meant to silence reviewers are prohibited.

- Fake social media influence indicators. Buying or selling fake followers, views, or engagement metrics where the brand knows (or should know) the indicators are fabricated.

Enforcement authority sits with the FTC. There is no private right of action — consumers cannot sue under the rule directly, though state consumer protection laws and class actions remain available. Violations carry civil penalties of up to $53,088 per violation (2025 adjustment), and each misleading review can be treated as a separate violation.
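Because each misleading review can count as a separate violation, exposure scales linearly with the number of reviews. The sketch below is only an arithmetic illustration of that ceiling: the function name is hypothetical, and the result is an upper bound, not a prediction of what the FTC would seek or a court would award.

```python
# Maximum civil penalty per violation cited above (2025 adjustment).
MAX_PENALTY_PER_VIOLATION_USD = 53_088


def max_penalty_exposure(violation_count: int) -> int:
    """Upper bound on penalties if every review counts as its own violation.

    Hypothetical helper for illustration; actual penalties are set case by case.
    """
    if violation_count < 0:
        raise ValueError("violation_count must be non-negative")
    return violation_count * MAX_PENALTY_PER_VIOLATION_USD


# 50 reviews, each treated as a separate violation.
print(f"${max_penalty_exposure(50):,}")  # $2,654,400
```

Even a modest batch of 50 purchased reviews puts the theoretical ceiling above $2.6 million.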

The FTC took its first public enforcement step under the rule on December 22, 2025, sending warning letters to 10 companies identified through consumer complaints. The letters demanded that recipients stop non-compliant practices immediately and document their remediation on short deadlines, signaling that the FTC intends to move quickly from warnings to formal enforcement when compliance is not demonstrated.

What tools existed to detect fake reviews (and why Fakespot shut down)

For roughly a decade, consumer-facing fake review detection was dominated by two tools: Fakespot and ReviewMeta.

Founded in 2015, Fakespot operated as a browser extension and mobile app that analyzed reviews on Amazon, eBay, Walmart, and other e-commerce sites, and it built a substantial user base. Mozilla acquired Fakespot in May 2023 and integrated its functionality into Firefox. In July 2025, Mozilla shut Fakespot down, stating that the product did not fit a model the company could sustain. The closure removed the most widely used consumer-facing review analysis tool from the market.

ReviewMeta operated similarly and stayed active into the mid-2020s, but it has since become largely inactive.

The shutdowns left a significant gap. Consumers now have fewer automated tools for assessing review authenticity at the moment of purchase. Manual verification and platform-level filtering remain the primary options. The gap is part of what Material Truths' Trust Grade system is designed to eventually address.

How can consumers spot fake reviews manually?

No single indicator proves a review is fake. Multiple indicators together strongly suggest inauthenticity. A practical checklist:

1. Timing clusters. If a product has 50 five-star reviews all posted within a 48-hour window, the pattern strongly suggests coordination. Authentic reviews tend to accumulate gradually over time.

2. Generic language. Authentic reviews tend to mention specific product characteristics, use cases, or personal context. Fake reviews often rely on generic superlatives ("Amazing product! Great quality!") without detail that would require actual experience.

3. Review-only accounts. A reviewer account with hundreds of reviews, all five-star, all on products from the same brand or category, is a red flag. Authentic reviewers typically have mixed ratings across varied products.

4. AI-generated tells. AI-generated reviews often feature unnaturally polished grammar, repetitive phrase structures, and hallucinated product features. The FTC rule explicitly includes AI-generated reviews as prohibited fake reviews.

5. Stock or AI-generated profile images. Reverse image search (Google Images, TinEye) can identify stock photos or AI-generated faces used as reviewer profiles.

6. Sentiment extremes without nuance. Authentic satisfied customers usually acknowledge some product limitations. Reviews that are uniformly positive with no caveats are suspicious, as are bursts of uniformly negative reviews, which can indicate a competitor's attack campaign.

7. Verified purchase badges. On Amazon specifically, "Verified Purchase" confirms the reviewer bought the product through Amazon, but it does not confirm that they were not paid or incentivized to write the review. The badge is a useful signal, not proof of authenticity.

8. Cross-reference reviews across platforms. If a product has glowing reviews on the brand's own site but poor reviews on Trustpilot, BBB, or other third-party platforms, the brand-controlled reviews may be curated or manipulated.
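Two of the checks above, timing clusters and review-only accounts, are mechanical enough to sketch in code. The example below is a minimal illustration, not a description of how any platform or detection tool works. The function names are hypothetical, the 50-review and 48-hour defaults come from item 1, and the 100-review cutoff is an assumed stand-in for "hundreds of reviews."

```python
from datetime import datetime, timedelta


def has_timing_cluster(timestamps, window=timedelta(hours=48), threshold=50):
    """Return True if at least `threshold` reviews fall inside any span of `window`.

    `timestamps` is expected to hold the posting times of five-star reviews.
    Defaults mirror checklist item 1; they are illustrative, not calibrated.
    """
    ordered = sorted(timestamps)
    start = 0
    for end, ts in enumerate(ordered):
        # Advance the left edge until the span is no longer than `window`.
        while ts - ordered[start] > window:
            start += 1
        if end - start + 1 >= threshold:
            return True
    return False


def looks_review_only(ratings, min_reviews=100):
    """Flag an account with many reviews that are all five-star.

    `min_reviews` is an assumed cutoff, not a figure from any platform.
    """
    return len(ratings) >= min_reviews and all(r == 5 for r in ratings)


base = datetime(2026, 1, 1)
burst = [base + timedelta(minutes=30 * i) for i in range(50)]  # 50 reviews in about a day
steady = [base + timedelta(days=3 * i) for i in range(50)]     # 50 reviews over months

print(has_timing_cluster(burst))   # True
print(has_timing_cluster(steady))  # False
```

As with the manual checklist, a positive result from either function is one signal to weigh alongside the others, not proof that a review is fake.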

Which platforms have the biggest fake review problems?

Each platform has its own manipulation patterns:

- Amazon. Review manipulation networks operate through closed Facebook groups, Telegram channels, and WeChat groups, often coordinating reviews in exchange for free products plus payment. Amazon reports removing hundreds of millions of suspected fake reviews annually.

- TikTok Shop. Relatively new to the U.S. market (expanded significantly since 2023), TikTok Shop has minimal review verification infrastructure compared to Amazon. Creator-affiliate reviews often blur the line between authentic endorsement and paid promotion.

- Google (Maps, Reviews). Local business reviews have been a major fraud vector, including competitor review attacks and review-farming by SEO firms.

- Yelp. Has the longest-established review filtering algorithm, which screens out approximately 25 percent of submitted reviews as "not recommended." Controversies have emerged about both legitimate reviews being filtered and manipulated reviews surviving.

- Trustpilot. Self-reported customer feedback with business response features. Multiple reports have documented businesses soliciting exclusively positive reviews while reporting negative ones as violations.

Recent FTC enforcement actions on fake reviews

The FTC's enforcement activity on reviews has accelerated significantly since the rule took effect.

- December 22, 2025: The FTC sent warning letters to 10 unnamed companies, its first public enforcement wave under the rule. The letters identified suspected violations including fake reviews, sentiment-conditioned incentives, and undisclosed insider reviews, and demanded remediation documentation within days.

- Pre-rule enforcement examples that remain instructive: The FTC brought significant cases against Fashion Nova for allegedly suppressing negative reviews and against Vision Path for allegedly paying for positive reviews. These cases established the enforcement framework the current rule codifies.

Class actions have also emerged. Courts have generally held that false advertising theories under state consumer protection laws can reach review manipulation even without the new federal rule.

This section is updated as new enforcement actions are documented.

Frequently asked questions

How common are fake reviews? Research suggests 30 to 40 percent of reviews on major platforms show signs of inauthenticity. On Amazon, an estimated 43 percent of bestselling products contain reviews flagged as inauthentic.

Are fake reviews illegal? Yes, under the FTC's Consumer Reviews and Testimonials Rule effective October 21, 2024, with penalties up to $53,088 per violation.

How do I spot a fake review? Look for timing clusters, generic language, review-only accounts, AI tells, stock profile images, sentiment extremes, and cross-platform discrepancies.

What is the FTC's fake review rule? 16 CFR Part 465, effective October 21, 2024, prohibiting six categories of review manipulation.

Do fake reviews still exist on Amazon? Yes. The rule creates liability for perpetrators but does not require platforms to verify review authenticity.

What happened to Fakespot? Acquired by Mozilla in 2023, shut down in July 2025, leaving a gap in consumer-facing review verification tools.

Further reading

- Astroturfing: Adjacent manipulation tactic often combined with fake reviews

- Sciencewashing: Broader pattern of marketing deception

- FTC Consent Order: What happens when a brand signs a settlement

Sources

- FTC. "Trade Regulation Rule on the Use of Consumer Reviews and Testimonials." 16 CFR Part 465. Federal Register, August 22, 2024.

- FTC. "The Consumer Reviews and Testimonials Rule: Questions and Answers." ftc.gov/business-guidance/resources/consumer-reviews-testimonials-rule-questions-answers

- FTC Business Blog. "A warning letter (or ten) for businesses: comply with the FTC's Consumer Review Rule." December 22, 2025.

- Mozilla. Announcement of Fakespot shutdown, July 2025.
